The Gestation of the Hubble

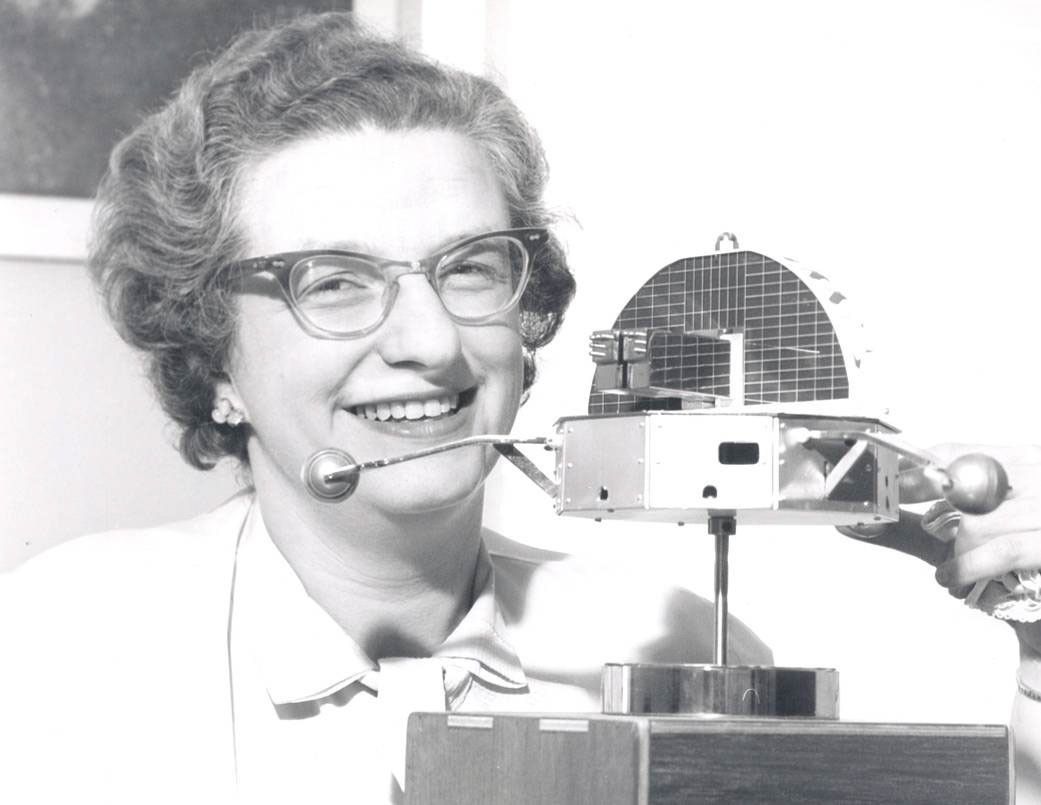

By Nancy Grace Roman

Looking through the atmosphere is like looking through a piece of old stained glass. The glass has defects that distort the image. The atmosphere also has defects that distort the image, but the defects in the atmosphere move, thus blurring the image as well. The glass is colored, so only some colors get through.

Until the mid-20th century, that did not appear to be a major problem. Stars primarily radiated like black bodies, and their temperatures were such that their radiation came through the atmosphere and our eyes adapted to seeing it. The development of radio astronomy, as a result of the technology stimulated by World War II, proved that the universe was far more complex and far more interesting than the staid view in the visible. This made astronomers eager to detect colors that do not come through the atmosphere. In addition, the glass is dusty. The dust scatters light, making the background brighter and harder to see through. The molecules in the atmosphere also scatter light. This is why we cannot see stars in the daytime. It also keeps us from seeing the faintest stars at night. Finally, unlike the glass, the atmosphere shines faintly, making the faintest objects invisible from the ground.

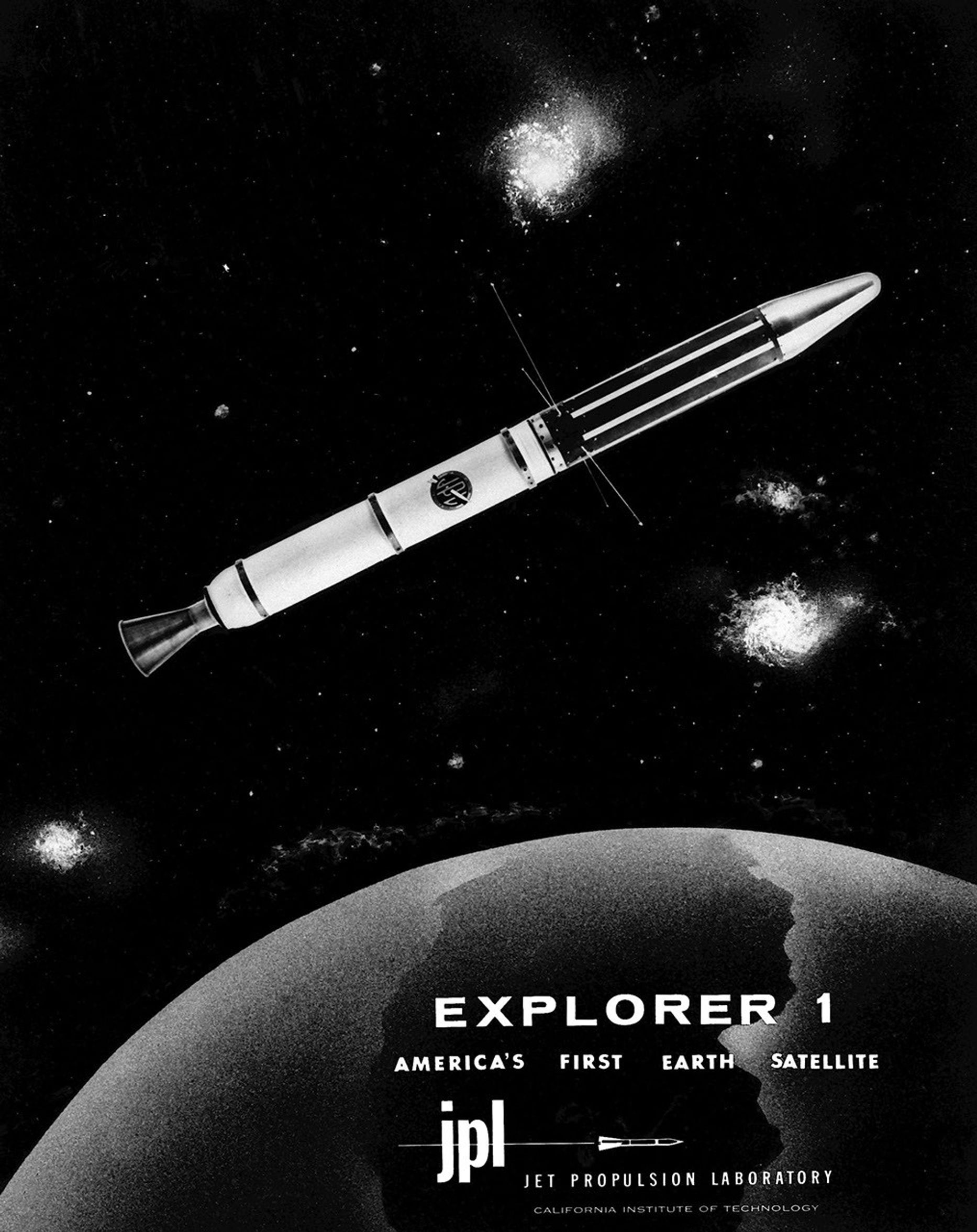

For these reasons, astronomers had been anxious for decades to put telescopes above the atmosphere, and they jumped at the opportunity provided by the opening of the Space Age. The first NASA astronomy missions hunted for high-energy radiation in the gamma-ray and X-ray regions of the spectrum. These searches relied on techniques that had been developed over decades for the measurement of cosmic rays and for studying high-energy phenomena in laboratories.

We knew from rocket observations that the Sun displayed interesting effects in the ultraviolet that changed continuously. This was an impetus behind the Orbiting Solar Observatories. Stellar astronomers were also interested in the ultraviolet. Young, massive stars emit most of their energy in that region. In addition, the strongest and simplest lines of common, light elements are in the ultraviolet. Without observations of these lines, it was impossible to analyze the compositions of stars. This led to the development of the Orbiting Astronomical Observatories with their emphasis on the ultraviolet of stars. We were less interested in the infrared at that time, and detector technology was too primitive to make this region easily accessible.

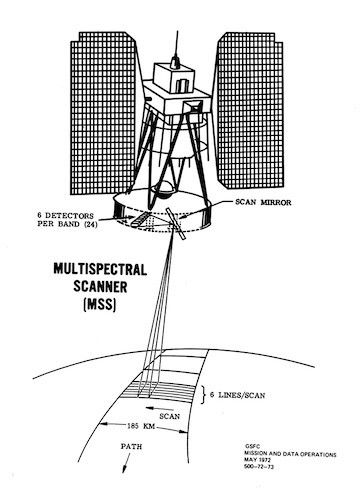

These instruments provided an exciting introduction to space astronomy, but astronomical objects are very distant. That makes them appear faint and tiny. A large mirror is required to collect enough light to analyze any but the brightest stars. The fineness of the detail that is discernible is a direct function of the size of the mirror. Thus, to take advantage of the dark sky and steady images above the atmosphere requires a large mirror. For decades, astronomers had longed for a large space telescope. In 1946, Lyman Spitzer wrote a short paper for the Rand Corporation describing the science that could be learned with a 4-meter telescope in space. This is generally considered the impetus for such a telescope in the U.S.
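The link between mirror size and discernible detail can be made concrete with the Rayleigh diffraction criterion, under which the smallest resolvable angle is roughly 1.22 times the wavelength divided by the mirror diameter. The sketch below is only an illustration and is not drawn from the planning documents of the time; the function name and the 550 nm wavelength are assumptions chosen for the example.

```python
import math

ARCSEC_PER_RADIAN = 180.0 * 3600.0 / math.pi


def diffraction_limit_arcsec(wavelength_m: float, aperture_m: float) -> float:
    """Rayleigh criterion: smallest resolvable angle, in arcseconds."""
    if wavelength_m <= 0 or aperture_m <= 0:
        raise ValueError("wavelength and aperture must be positive")
    return 1.22 * wavelength_m / aperture_m * ARCSEC_PER_RADIAN


if __name__ == "__main__":
    wavelength = 550e-9  # assumed: green visible light, in meters
    # Mirror diameters (in meters) discussed in this article.
    for diameter in (1.8, 2.4, 3.0):
        limit = diffraction_limit_arcsec(wavelength, diameter)
        print(f"{diameter:.1f} m mirror: {limit:.3f} arcsec")
```

Halving the mirror diameter doubles the smallest resolvable angle, which is why the aperture mattered so much once the blurring of the atmosphere was removed.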

From time to time, NASA asks the National Academy of Sciences (NAS) for advice on its science program. In the summer of 1962, the Academy assembled a group of scientists at the University of Iowa, dividing the group into various committees representing different areas of science, including one for astronomy. One astronomer had studied the characteristics of the Saturn rocket and determined that it could carry a 3-meter telescope. The entire astronomy committee jumped on the idea. That is what they really wanted. I thought that it was too early to start work on such a project. I knew how much trouble we were having trying to develop a satellite and instrumentation for a 6-inch telescope. This telescope was not successful until 1968. Thus, I essentially ignored the idea.

At that time, NASA’s Langley Research Center (LaRC) was responsible for NASA’s human space program. Some of the engineers there jumped on the idea of developing a large, manned orbiting telescope. The NAS conducted another study in the summer of 1965. By this time, the astronomers only argued about whether the telescope should be in orbit or on the Moon. The latter would provide a stable base, making the telescope less sensitive to the motion of parts, and also provide a reference system for the pointing controls. Connected to a manned base, it could be used much as ground-based telescopes are used. There were also disadvantages with the Moon. Perhaps the most serious one was that it was unclear how soon such an installation would be feasible. The Moon appeared to be undesirably dusty. Moreover, its motion is complex, making the guidance difficult before modern computers were well developed. Nevertheless, the issue remained alive until the early 1970s.

Several aerospace companies were intrigued by the LaRC idea and presented designs for a manned, large space telescope. This was the last thing astronomers wanted! With one small exception, research had not been done by a person looking through a telescope for almost a century. Beyond that, a man needed an atmosphere, and that was what we were trying to get away from. In addition, a man would wiggle during long exposures, and that would cause the telescope floating in orbit to wiggle in the opposite direction, blurring the image. I still thought it was too early to design a satellite for a 3-meter telescope, but decided that if companies were going to spend money designing such a satellite system, they might as well design a usable one.

A major problem at this stage was to win the support of the general astronomical community, many of whom had no interest in observations from space. One facet of attacking this problem was to set up a working group under the auspices of the National Academy of Sciences (NAS) on the uses of a Large Space Telescope (LST), under the direction of Lyman Spitzer.

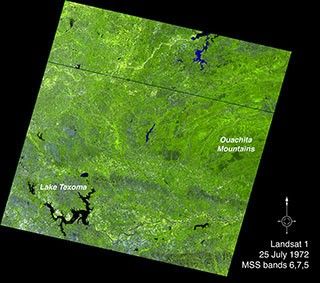

The committee held an early meeting in Pasadena, California, to discuss the use of such a telescope for studies of galaxies, cosmology, and interstellar matter. Numerous West Coast astronomers attended the meeting, increasing their understanding of the possibilities and, hence, somewhat decreasing their antipathy. Although the members of the working group were supporters, the cachet of the NAS gave their report, which was published in 1969, special importance. I met with many astronomers to discuss the promise of a 3-meter telescope above the atmosphere. In addition, I gave many illustrated public talks on the questions that we expected such a telescope to answer, although I also emphasized that the most important results would be those we could not predict.

The Astronomy Working Group that had been established to advise me on the entire astronomy program also started to discuss what was really needed for a successful LST and the engineering problems that required solution. By 1971, I had set up an LST Science Steering Group to work only on the LST. For this, I brought together astronomers from all over the country, representing various interests that could be served by a large space telescope, and some NASA engineers to sit down and outline a design that would meet the needs of the astronomers and that the engineers thought would be doable. Purposely, I included several who were not really enthusiastic about the project but whose science could benefit from the program. Together, we sketched the system that would become the basis for the Hubble.

After about 2 years, a more detailed design was needed. NASA’s Marshall Space Flight Center was assigned the responsibility for turning our sketch into a design. I maintained a general overview of the continued developments as program scientist, but Robert O’Dell was hired in September 1972 as the project scientist, with the detailed responsibility for keeping the scientific requirements at the center of the planning.

At one point, there was a strong push to decrease the diameter of the mirror, probably to make use of facilities that existed for other purposes. We were asked to consider mirror sizes of 2.4 m and 1.8 m. A primary objective of the telescope was to determine the brightness of Cepheid variables in the Virgo cluster of galaxies. Hubble had shown that the velocity of recession of distant galaxies was proportional to their distance. However, the proportionality constant was uncertain by a factor of two. Galaxies have random motions. The random velocities of distant galaxies are small compared to the velocity caused by expansion, but for nearby galaxies, these random motions overwhelm the general expansion. Moreover, the nearby galaxies are in a group in which they interact gravitationally.

To determine the proportionality constant, it was necessary to determine the distance of a cluster of galaxies not interacting with nearby galaxies and distant enough that the random velocities are not significant on the average. The nearest suitable cluster is the Virgo cluster of galaxies at a distance of about 54 million light-years. Henrietta Leavitt had shown that the brightness of a particular class of variable stars, called Cepheids, was an accurate function of the periods of variation. We could calibrate this relation for Cepheids in the Milky Way galaxy. Thus, if we could observe these variables in the Virgo cluster, we could determine the distance of the cluster. Measuring the velocity of the expansion was easy. I and, independently, several others determined that with the available detectors, we could reach the Cepheid variables in the Virgo cluster with a 2.4 m mirror but that we could not do so with a 1.8 m mirror. Dropping the mirror diameter to 2.4 m also made it easier to design a satellite that would fit the space shuttle.
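The chain of reasoning in the preceding paragraphs (a Cepheid's period gives its true brightness, true and apparent brightness together give a distance, and distance together with recession velocity gives the proportionality constant) can be sketched numerically. The following is a minimal illustration, not the calculation made at the time: the function names are hypothetical, the magnitudes and recession velocity in the usage lines are placeholder inputs rather than measurements, and the period-luminosity calibration itself is omitted because the article gives no coefficients.

```python
import math

LIGHT_YEARS_PER_PARSEC = 3.2616  # approximate conversion factor


def distance_parsecs(apparent_mag: float, absolute_mag: float) -> float:
    """Invert the distance modulus m - M = 5 * log10(d / 10 pc)."""
    return 10.0 ** ((apparent_mag - absolute_mag + 5.0) / 5.0)


def expansion_constant(velocity_km_s: float, distance_mpc: float) -> float:
    """Proportionality constant in v = H * d, in km/s per megaparsec."""
    if distance_mpc <= 0:
        raise ValueError("distance must be positive")
    return velocity_km_s / distance_mpc


def light_years_to_mpc(light_years: float) -> float:
    """Convert light-years to megaparsecs."""
    return light_years / LIGHT_YEARS_PER_PARSEC / 1.0e6


if __name__ == "__main__":
    # Placeholder magnitudes for a single Cepheid (not measured values).
    d_pc = distance_parsecs(apparent_mag=26.0, absolute_mag=-5.0)
    print(f"Cepheid distance: {d_pc / 1.0e6:.1f} Mpc")

    # The article's figure for the distance of the Virgo cluster.
    virgo_mpc = light_years_to_mpc(54.0e6)
    # Placeholder recession velocity in km/s (an assumption for illustration).
    print(f"H = {expansion_constant(1200.0, virgo_mpc):.0f} km/s/Mpc")
```

Because the distance enters the constant directly, any error in the Cepheid distance carries straight through to the result, which is why reaching these faint stars set the minimum mirror size.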

As the early design developed, it was necessary to make a place for the project in the NASA plans. It was relatively easy to convince my superiors in NASA that such a telescope would be worth the cost. Convincing the political community, with little understanding of science, was more difficult. James Webb, the administrator of NASA at that time, gave a series of dinners for men with political power. After each dinner, three of us presented a “dog and pony show.” Jesse Mitchell discussed the engineering and its feasibility, Dick Halpern presented the management plans, and I described the scientific research we expected to do with the telescope. I never testified before Congress, but I did write congressional testimony to justify the Large Space Telescope for about 10 years. I also pitched the case for the telescope to representatives of the Bureau of the Budget (now the Office of Management and Budget), the agency that prepares the budget the president sends to Congress. At some point, for political reasons, the word “Large” was dropped from the name, with the satellite simply becoming the Space Telescope until launch.

In spite of these efforts, Congress continuously postponed approval for construction. Even after construction was started, Congress cut the budget below an optimum level. Of course, this increased the final cost of the mission. By the early to mid-1970s, astronomers organized major lobbying efforts. This finally led to the approval of the project.

At one point, then-Sen. William Proxmire (D-Wisconsin), noted for ridiculing government funding that he considered frivolous, asked NASA why the American taxpayer should support an expensive telescope. I did a back-of-envelope calculation and determined that for the cost of one night at the movies, every American would have 15 years of exciting discoveries. I was probably off by a factor of four or five, depending on how launch and servicing costs are allocated, but we shall probably have 25 years of discoveries. Even at a cost of a night at the movies once a year, which would more than cover costs by any accounting, I think that most Americans believe that the expenditure has been worth it.

At the time the Hubble was being designed, NASA was pitching the space shuttle as a cheap way to launch spacecraft. To lower the costs, a busy launch schedule was required. Therefore, all satellites were designed to be launched by the shuttle and several were designed to be serviceable. The Hubble was scheduled to be launched by the next flight after the Challenger accident. That catastrophe cancelled all shuttle launches for 3 years, during which the satellite was kept in storage and a knowledgeable group of engineers kept on the payroll until the 1990 launch. These 3 wasted years also added significantly to the cost of the mission.

The Challenger experience caused NASA to rethink its use of the shuttle for most missions. Most payloads had to be redesigned for robotic launches. Fortunately, the Hubble was too far along to be changed. The ability to service it with the shuttle not only saved the basic mission after the mirror problem was discovered, but also provided the possibility of replacing instruments from time to time with more modern versions, thus greatly increasing the capability of the telescope.

As mentioned earlier, I started funding development of detectors early in the program. A major portion of the funding for ultraviolet detectors went to Princeton University, which subcontracted to Westinghouse for the development of an intensified vidicon for the telescope camera. The Steering Group, and later the Working Group, assumed that this detector was already chosen. As the time approached for the selection of the scientific instruments for the telescope, I was unsatisfied with the progress on the intensified vidicon. At a Steering Group meeting shortly before the selection of the instruments, I arranged a presentation of various types of detectors.

Charge-coupled devices (CCDs) had clear advantages in resolution, sensitivity, and stability. These are arrays of tiny, solid-state chips (pixels), each sensitive to photons. At the conclusion of an exposure, the intensity recorded by each chip is read sequentially down a column, and then the sums are read across. In this way, a map of intensity as a function of position, that is, a picture, is obtained. Commercial establishments were strongly interested in supporting their development. (They are the basis of the modern digital camera and are also used for TV cameras.) A problem is that a bare CCD is not sensitive in the ultraviolet. Nevertheless, as a result of this presentation, the Working Group decided to open the choice of detector for the camera. When a proposal from Jim Westphal solved the ultraviolet sensitivity problem by coating the CCD surface with an organic substance that fluoresced in the visible when hit with ultraviolet light, the vidicon lost the competition.

Many in the astronomical community were unhappy with NASA management of the Space Telescope. They wanted it in the hands of astronomers with a management contractor in the way that the National Optical and Radio Observatories were handled. This overlooked the fact that the scope of the LST construction and operation was far larger than that of the ground-based observatories. Nevertheless, there was one area in which the community’s insistence on operation by scientists was non-negotiable: the scientific management of the operation. This nearly cost me my job. Goddard badly wanted the scientific operation of the telescope. After considering this, I decided that it was much too big a job for the small astronomy group at Goddard, even if the astronomical community would have stood still for such an arrangement. As a result, the scientific and astronomy leaders at Goddard talked Noel Hinners into transferring me to a different job. I decided that I did not want the other job and stayed put for a year or so.

I took advantage of an early-out period to retire in 1979, but continued for 9 months longer as the Space Telescope program scientist in order to participate on the Source Selection Board for the Space Telescope Science Institute, which would manage the scientific operations of the Space Telescope. I found this an interesting experience. There were five proposals, four of which based the Institute at Princeton University. The proposals from Associated Universities Incorporated, which managed the National Radio Astronomy Observatory, and from the Association of Universities for Research in Astronomy, which managed the National Optical Astronomy Observatories, were highly competitive, and the decision between them was difficult. The latter placed the Institute at Johns Hopkins University in Baltimore. Many people believed that it was selected because Baltimore is closer to Goddard. That has helped over time but did not enter our deliberations.

I left the project before substantial management problems arose, leaving their solution to my successor, Ed Weiler. He also had to handle the discovery of the mirror problem. It was clear from his actions in these major fiascos that I had left the project in good hands.

About the Author

Nancy Grace Roman

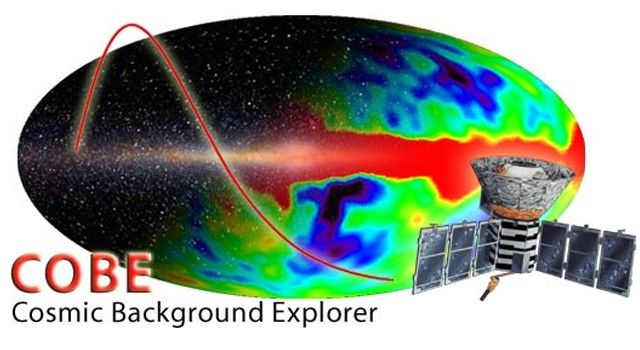

Nancy Grace Roman received her Ph.D. in astronomy from the University of Chicago in 1949. She joined NASA in 1959 and became the first chief of astronomy in the Office of Space Science, where she had oversight of the planning and development of programs including the Cosmic Background Explorer and the Hubble Space Telescope. Dr. Roman finished her NASA career at the Goddard Space Flight Center, retiring as manager of the Astronomical Data Center in 1979, and continued to work at Goddard as a contractor. The first woman to hold a leadership position at NASA, Dr. Roman has been an advocate for women in the sciences throughout her career.
